Attention Is All We Have

Why AI Doesn't Need to Get Smarter to Take Your Job

There’s an overlooked aspect of LLMs that I haven’t seen anyone articulate.

This aspect is so essential that once you see it, it will change your entire perspective on what AI is and how it’s going to impact the future.

But to understand this, we first need a shared understanding of what we mean by the word “intelligence”.

An elegant definition of intelligence comes from William James: “Intelligence is the ability to reach the same goal by different means”. On this view, an intelligent system can be measured by the range of goals it can pursue and the variety of methods it can employ to achieve them.

For example, a mouse can satiate its hunger in many ways, by being clever about what kind of food it gathers and how it acquires it. A thermostat, by contrast, is designed to manage the temperature in a room and can only use its heating element to do so.

But to understand what makes a mouse so different from a simple system like a thermostat, we first need to look at how such a system works.

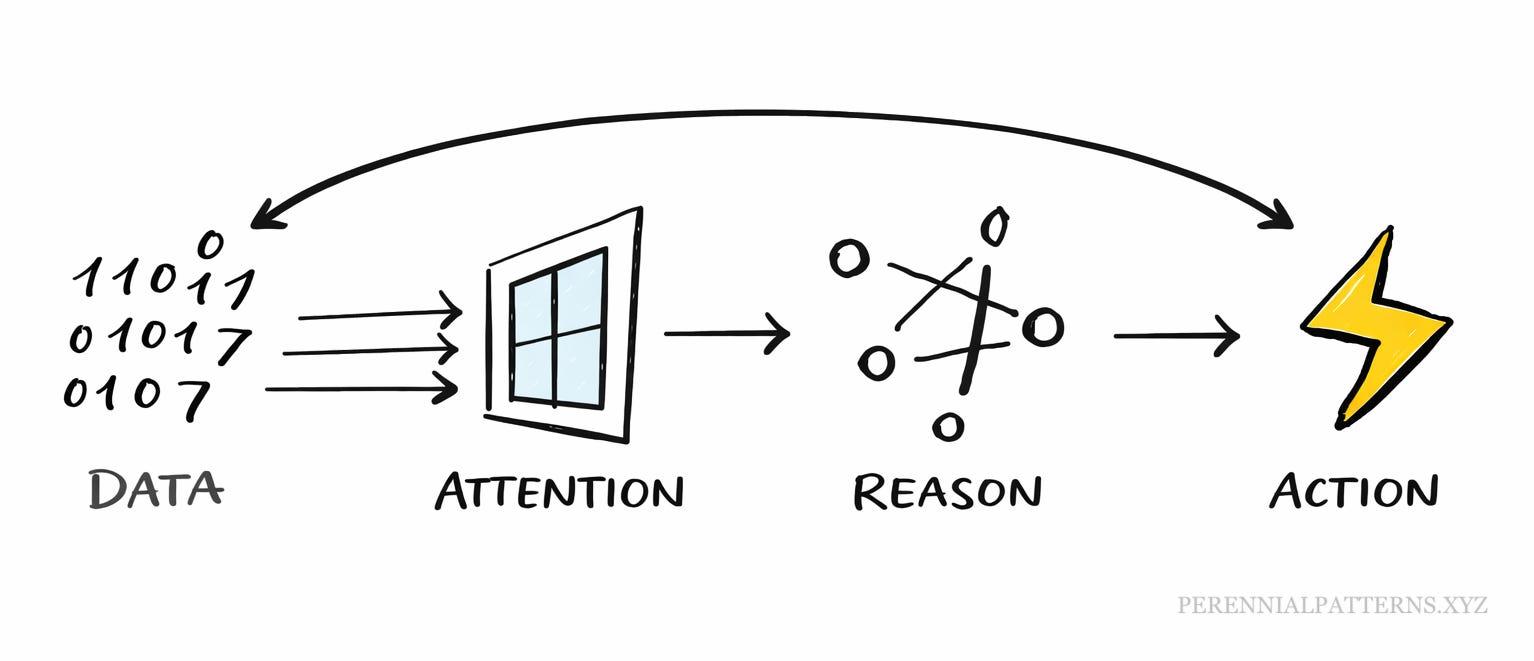

For a thermostat to do its job, it has to perform four distinct functions:

Data – Consume raw temperature and user input data.

Attention – Select the data relevant to reasoning.

Reason – Decide whether to increase or decrease the temperature.

Action – Send the decision to the heating element.

The action then changes the environment, new data is consumed, and the cycle repeats.
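The four-stage loop can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how any real thermostat is built: the function names, the extra `humidity` and `time` fields (standing in for data the system should ignore), the 0.5-degree dead band, and the heating and cooling rates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Room:
    """A toy environment whose temperature responds to the heater."""
    temperature: float


def read_data(room: Room, target: float) -> dict:
    # Data: consume raw readings; humidity and time are irrelevant noise here.
    return {"temperature": room.temperature, "target": target,
            "humidity": 0.45, "time": "14:03"}


def attend(raw: dict) -> tuple[float, float]:
    # Attention: keep only what the reasoning step needs.
    return raw["temperature"], raw["target"]


def reason(current: float, target: float, band: float = 0.5) -> bool:
    # Reason: heat if the room is colder than the target minus a dead band.
    return current < target - band


def act(room: Room, heat_on: bool) -> None:
    # Action: the heater warms the room; otherwise it cools slightly (assumed rates).
    room.temperature += 1.0 if heat_on else -0.3


def run(room: Room, target: float, steps: int) -> list[bool]:
    decisions = []
    for _ in range(steps):
        raw = read_data(room, target)
        current, goal = attend(raw)
        heat_on = reason(current, goal)
        act(room, heat_on)         # the action changes the environment...
        decisions.append(heat_on)  # ...so the next iteration reads new data
    return decisions


print(run(Room(18.0), 20.0, 5))  # [True, True, False, False, True]
```

Starting at 18 degrees with a target of 20, the loop heats twice, idles while the room drifts down, then heats again. In a thermostat, the attention step is a single dictionary lookup.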

This breakdown reveals two interesting things.

The first is that what we usually think of as “intelligence”, the reasoning part of this system, really is just one component required for the system to function.

The second is that to make use of the raw data, the thermostat needs attention to pick out what’s relevant.

In a thermostat, the attention function seems trivial, and so it is easily overlooked. But I’d argue that it is just as important a part of an intelligent system as reasoning, and it holds the key to what LLMs unlock in human knowledge work.

LLMs are not artificial intelligence, but artificial attention

Before LLMs, if you wanted a computer to find every mention of the word “potato” in a document, you could write a simple program to do it. Ten lines of code, problem solved.
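For reference, here is roughly what that simple program looks like in Python. It is one possible version, using a whole-word, case-insensitive regular expression; the sample text is made up.

```python
import re


def find_mentions(text: str, word: str) -> list[int]:
    """Return the character offset of every whole-word, case-insensitive match."""
    pattern = re.compile(rf"\b{re.escape(word)}\b", re.IGNORECASE)
    return [match.start() for match in pattern.finditer(text)]


doc = "Potato soup for lunch. She mashed a potato. Potatoes were sold out."
print(find_mentions(doc, "potato"))  # [0, 36]
```

Even here there are small judgment calls: the whole-word pattern matches “Potato” and “potato” but skips “Potatoes”.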

But what if you ask that same program to find every place where the concept of food comes up? Now you’re in trouble. “Potato” is easy. But what about “grabbed a bite,” “starving,” “the smell from the kitchen,” or a character preparing for a dinner party? The task explodes in complexity.

Now ask it to do that across images, video, audio, and text simultaneously. You’ve just described a problem that was, until recently, effectively impossible.
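To see why the task explodes, consider the obvious way to stretch keyword search to a concept: a hand-maintained keyword list. The list and sentences below are hypothetical examples chosen to illustrate the failure modes.

```python
import re

# Hypothetical keyword list standing in for "the concept of food".
FOOD_WORDS = ["potato", "bread", "dinner", "lunch", "eat", "meal", "food"]
FOOD_PATTERN = re.compile(r"\b(" + "|".join(FOOD_WORDS) + r")\b", re.IGNORECASE)


def mentions_food(sentence: str) -> bool:
    """True if any keyword appears as a whole word in the sentence."""
    return FOOD_PATTERN.search(sentence) is not None


sentences = [
    "We had potato soup for lunch.",                 # found: "potato", "lunch"
    "He grabbed a bite on the way out.",             # missed: about food, no keyword
    "By noon she was starving.",                     # missed
    "The smell from the kitchen drifted upstairs.",  # missed
    "The team will eat the cost of the delay.",      # false positive: "eat"
]

for s in sentences:
    print(mentions_food(s), s)
```

Adding “bite” or “starving” to the list fixes two sentences but invites new false positives (“a mosquito bite”), and the list never ends.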

What makes LLMs valuable isn’t that they reason better than us. It’s that they can hold a concept in mind and hunt for it across any medium, in any form it might take. They can attend to meaning, not just pattern-match on keywords.

This is exactly what human attention does: it dynamically filters a flood of raw data based on a fuzzy, contextual idea of what matters to the goal at hand.

But unlike human attention, LLM attention doesn’t get tired or distracted. The third hour of contract review is as good as the first. LLMs bring the same attention to page one thousand as they do to page one.

For the first time in history, we have inexhaustible artificial attention.

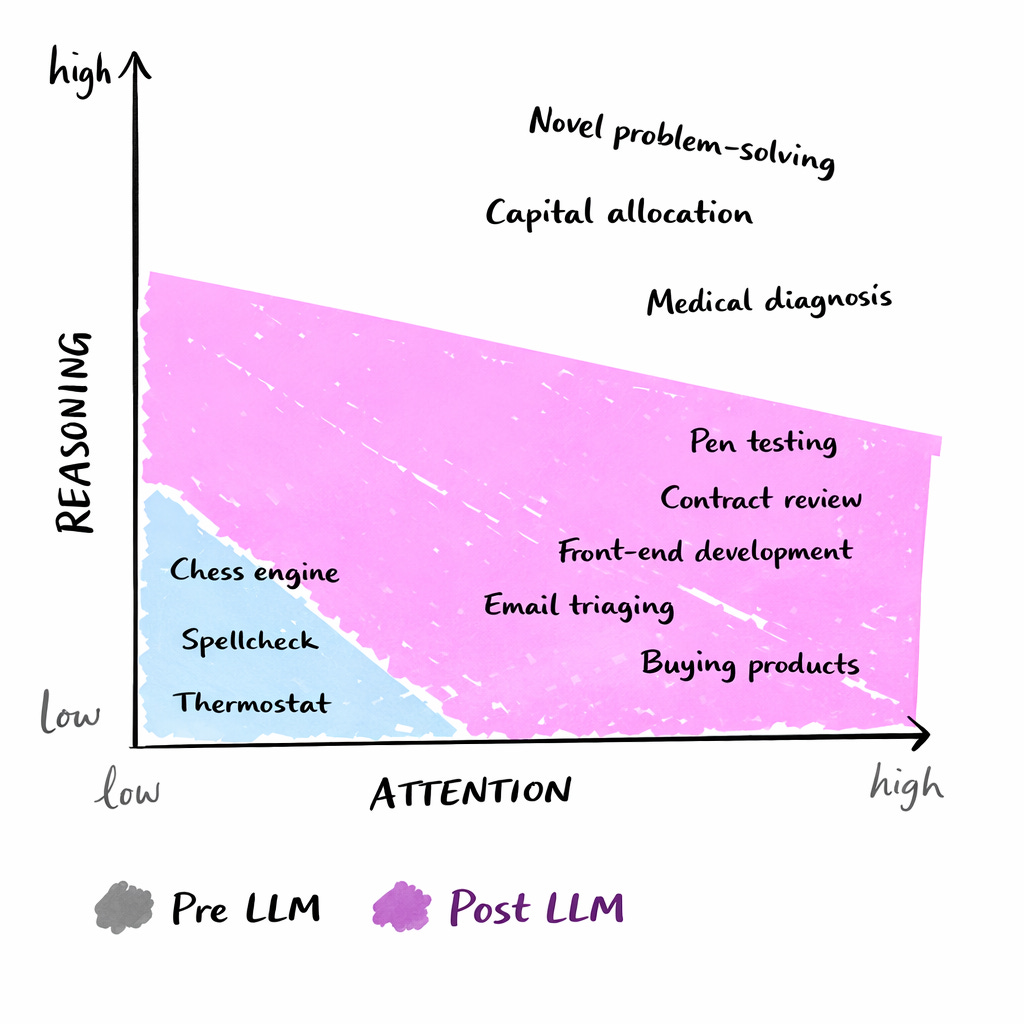

Most knowledge work is low reasoning and high attention

It’s worth noting that LLMs can reason, and their ability to do so fluidly is novel. But to be honest, for any specific problem, a purpose-built program will usually outperform them.

A trading algorithm will beat an LLM at trading. A chess engine will beat an LLM at chess.

What LLMs offer isn’t better reasoning. It’s reasoning that can be applied to anything, because they can attend to anything.

And it turns out that for most knowledge work, reasoning was never the bottleneck. Attention was.

Just look at how much knowledge work, across industries, requires attention rather than reasoning:

Legal: Contract review – scanning hundreds of pages to flag non-standard clauses, where judging whether a clause is problematic is straightforward once it’s found.

Medical: Radiology – maintaining focus across dozens of scans to catch the one anomaly, even though radiologists already know exactly what a tumor looks like.

Finance: Reconciliation – matching records across systems, such as bank statements against internal ledgers, and checking for discrepancies.

Research: Literature review – reading dozens of papers to find the handful actually relevant to your question.

Software: Log analysis – sifting through thousands of lines of system logs to find the error that explains an outage.

Administrative: Email triage – deciding, across a full inbox, what needs a response, what can be delegated, and what can be ignored.

In each of these cases, the reasoning is often as simple as “if this, then that”. The hard part is digesting enough information to know when “this” applies.
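The reconciliation case makes the split concrete. The sketch below assumes, hypothetically, that bank and ledger records already share a reference ID; once records are paired, the “reasoning” is a single comparison. In real data that pairing is the hard part (mismatched descriptions, split payments, typos), and it is exactly the attention work described above. The `Record` type and sample data are illustrative only.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Record:
    ref: str         # hypothetical shared reference ID
    amount: Decimal  # Decimal avoids float rounding surprises with money


def reconcile(bank: list[Record], ledger: list[Record]) -> dict[str, list[str]]:
    """Pair records by reference ID, then apply one if-this-then-that rule."""
    ledger_by_ref = {r.ref: r for r in ledger}
    bank_refs = {r.ref for r in bank}
    mismatched: list[str] = []
    missing_in_ledger: list[str] = []

    for rec in bank:
        match = ledger_by_ref.get(rec.ref)  # finding the counterpart: trivial here
        if match is None:
            missing_in_ledger.append(rec.ref)
        elif match.amount != rec.amount:    # the entire "reasoning" step
            mismatched.append(rec.ref)

    missing_in_bank = [r.ref for r in ledger if r.ref not in bank_refs]
    return {
        "mismatched": mismatched,
        "missing_in_ledger": missing_in_ledger,
        "missing_in_bank": missing_in_bank,
    }


bank = [Record("A1", Decimal("120.00")), Record("A2", Decimal("45.50")),
        Record("A3", Decimal("9.99"))]
ledger = [Record("A1", Decimal("120.00")), Record("A2", Decimal("54.50")),
          Record("A4", Decimal("300.00"))]
print(reconcile(bank, ledger))
# {'mismatched': ['A2'], 'missing_in_ledger': ['A3'], 'missing_in_bank': ['A4']}
```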

Attention is the bottleneck. Not reasoning.

The cost of attention just dropped by ~99.7%

To understand the economic impact of this, it’s worth putting it into numbers.

Take a knowledge worker earning $75,000 a year. How many hours of real, focused attention do they have each day? Not time in the office, but time when they’re actually locked in. Conservatively, it’s about two hours. The rest disappears into meetings, email, context-switching, and the afternoon fog.

Two hours × 250 working days = 500 hours of peak attention per year.

$75,000 ÷ 500 hours = $150 per hour of high-quality human attention.

In that hour, assume a focused worker can process about 10,000 words, or 40 pages.

That’s $15 per 1,000 words.

A top LLM can process the same 1,000 words for about $0.05.

That’s 300× cheaper: a 99.7% drop in the cost of human-like attention.
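The arithmetic above can be reproduced in a few lines, using the article’s own estimates as default inputs: a $75,000 salary, two focused hours a day over 250 days, 10,000 words per focused hour, and $0.05 per 1,000 words for an LLM. These are rough assumptions, so the useful output is the order of magnitude rather than the exact percentage.

```python
def attention_cost_comparison(
    salary: float = 75_000,              # annual salary in dollars
    focused_hours_per_day: float = 2,    # hours of real, locked-in attention
    working_days: int = 250,
    words_per_hour: float = 10_000,      # about 40 pages per focused hour
    llm_cost_per_1k_words: float = 0.05,
) -> dict[str, float]:
    focused_hours = focused_hours_per_day * working_days           # 500 hours
    cost_per_hour = salary / focused_hours                         # $150
    human_cost_per_1k = cost_per_hour / (words_per_hour / 1_000)   # $15
    ratio = human_cost_per_1k / llm_cost_per_1k_words              # ~300x
    drop_pct = (1 - 1 / ratio) * 100                               # ~99.7%
    return {
        "focused_hours": focused_hours,
        "cost_per_hour": cost_per_hour,
        "human_cost_per_1k_words": human_cost_per_1k,
        "ratio": ratio,
        "drop_pct": drop_pct,
    }


result = attention_cost_comparison()
print(f"${result['cost_per_hour']:.0f}/hour, "
      f"${result['human_cost_per_1k_words']:.2f} per 1,000 words")
print(f"{result['ratio']:.0f}x cheaper, a {result['drop_pct']:.1f}% drop")
```

Changing an input shifts the ratio but not the conclusion: doubling focused time to four hours a day halves it to 150×, which still leaves an enormous gap.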

Implications of artificial attention

Seen this way, it’s reasonable to expect the invisible hand of the market to gradually shift all work that requires human-like attention over to AI.

The incentives are so large that it’s just a matter of time.

My suspicion is that this will happen in a particular order, with high-attention, low-reasoning tasks going first.

Things like buying a product on Amazon, booking travel, or comparing insurance quotes. Over time, as models improve, they’ll climb the ladder toward higher-stakes, higher-reasoning decisions.

One downstream consequence is that the attention economy as we know it will change. Large parts of the internet are monetized by capturing human attention and selling ads against it.

Anything people use the internet for that doesn’t entertain them is likely to be handed off to AI agents, leaving fewer human eyes to sell those ads to.

Another implication is that some kinds of work that were never worth doing, because the cost of attention was simply too high, may now become viable.

What business ideas become feasible when you can have a firehose of cheap attention?

These are just some of the questions this lens raises, and I’m curious to hear what others come up with.

If you have ideas or insights, please comment below.